Misinformation about the pandemic and the health measures that are effective against SARS-CoV-2 has been a major problem in the United States. It has fueled organized resistance to everything from mask use to vaccines, which has undoubtedly cost lives.

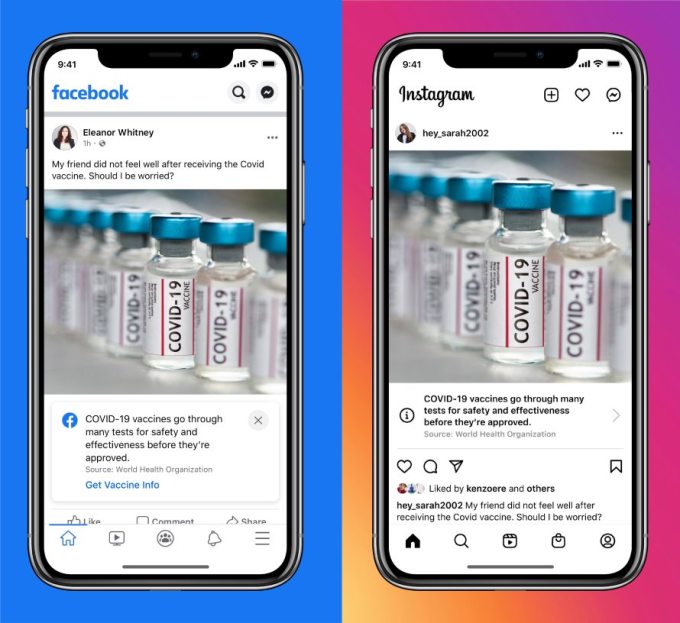

Many factors have contributed to the spread of misinformation, but social media is undoubtedly one of them. While the companies behind the major networks have made some attempts to curb the spread of misinformation, internal documents indicate that much more could be done.

More effective intervention, however, would benefit from a clearer picture of the problem. To that end, a recent report by the Vaccine Disinformation Network presents data that could be useful. It finds that most of the worst sources of disinformation are probably too small to stand out as needing moderation. The data also suggests that the pandemic has pushed traditional parenting groups much closer to conspiracy theory groups.

Covid-19 network coverage analysis

The researchers behind this analysis had access to an extremely valuable resource: they had studied the Facebook networks that helped spread vaccine disinformation shortly before the pandemic. They then repeated the analysis once disinformation about the pandemic was circulating widely.

Their method was simple: they checked whether a given group had clicked “like” on another group’s page. This is a small action, but it has a significant effect: any post from the liked group then has a chance of appearing in the timeline of the group that liked it. Exactly how often a post appears depends on the undisclosed details of Facebook’s algorithms, but without the like, none of the posts would show up at all.
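
The like relationship described above is directional, which can be sketched as a small directed graph. This is a minimal illustration only: the group names and the `can_see` helper are invented for the example, and nothing here reflects the researchers' actual data or tooling.

```python
from collections import defaultdict

# Hypothetical observations: (liker, liked) means the first group clicked
# "like" on the second group's page. All names are invented.
likes = [
    ("parenting_tips", "natural_remedies"),
    ("parenting_tips", "vaccine_skeptics"),
    ("natural_remedies", "anti_gmo"),
    ("vaccine_facts", "public_health_news"),
]

def build_feed_sources(like_pairs):
    """Map each group to the set of groups whose posts may reach its timeline."""
    sources = defaultdict(set)
    for liker, liked in like_pairs:
        sources[liker].add(liked)
    return dict(sources)

def can_see(viewer, poster, sources):
    """True if the viewer liked the poster, so the poster's posts may appear."""
    return poster in sources.get(viewer, set())

feed_sources = build_feed_sources(likes)
```

A like only opens the channel in one direction: here `parenting_tips` may see posts from `vaccine_skeptics`, but not the other way around. How often a post is actually shown is left out, since that depends on Facebook's algorithms.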

To get a better sense of the network created by these likes, the researchers classified the groups involved as pro-vaccine, anti-vaccine, or neither. They then went through the groups in the “neither” category and tagged their interests, which could be anything from sports to food. They then used the ForceAtlas2 software to create a visual representation of the network. The software displays the network’s nodes (individual Facebook groups) and sets the distances between them based on the strength of their connections. Two connected groups end up closer to each other, and groups that also share connections to the same other groups end up closer still.

As one might expect, this algorithm gathers groups with similar interests into clusters. For example, the pro- and anti-vaccine groups each stand out clearly in the resulting network diagram.
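
To give a feel for how a force-directed layout produces clusters, here is a minimal sketch of a basic spring layout. It is a simplified stand-in, not ForceAtlas2 itself: every pair of nodes repels, linked nodes attract, and the positions are nudged until they settle. The node names, the sample edges, and all parameter values are assumptions made for illustration, and likes are treated as undirected links.

```python
import math
import random

def force_layout(nodes, edges, iterations=500, step=0.02, max_move=0.1, seed=0):
    """Return {node: (x, y)} from a basic spring layout (not ForceAtlas2)."""
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    pos = {n: [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)] for n in nodes}
    for _ in range(iterations):
        force = {n: [0.0, 0.0] for n in nodes}
        # Every pair of nodes repels with strength proportional to 1/distance.
        for i, a in enumerate(nodes):
            for b in nodes[i + 1:]:
                dx = pos[a][0] - pos[b][0]
                dy = pos[a][1] - pos[b][1]
                dist = math.hypot(dx, dy) or 1e-9
                fx, fy = dx / dist**2, dy / dist**2
                force[a][0] += fx
                force[a][1] += fy
                force[b][0] -= fx
                force[b][1] -= fy
        # Linked nodes attract with strength proportional to their distance.
        for a, b in edges:
            dx = pos[b][0] - pos[a][0]
            dy = pos[b][1] - pos[a][1]
            force[a][0] += dx
            force[a][1] += dy
            force[b][0] -= dx
            force[b][1] -= dy
        # Move each node along its net force, capping the size of each step.
        for n in nodes:
            fx, fy = force[n]
            mag = math.hypot(fx, fy)
            if mag > 0:
                move = min(step * mag, max_move)
                pos[n][0] += fx / mag * move
                pos[n][1] += fy / mag * move
    return {n: (p[0], p[1]) for n, p in pos.items()}

# Hypothetical network: two tightly linked trios joined by a single link.
nodes = ["A1", "A2", "A3", "B1", "B2", "B3"]
edges = [("A1", "A2"), ("A2", "A3"), ("A1", "A3"),
         ("B1", "B2"), ("B2", "B3"), ("B1", "B3"),
         ("A3", "B1")]
layout = force_layout(nodes, edges)
```

In a layout like this, the two trios should settle into separate clumps held together by the single bridging link, which is the same qualitative behavior the article describes. ForceAtlas2 uses its own force model and tuning options, so real output will differ in detail.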

Within the nodes

There were some troubling signs even in the pre-pandemic analysis. The first is that, despite having nearly twice as many members as anti-vaccine groups, pro-vaccine groups tended to keep to themselves. They were tightly clustered, indicating many links among them, but they had few strong connections to other groups. This suggests that vaccine supporters were largely talking to one another.

Anti-vaccine groups also clustered together. However, these groups were heavily linked to others, particularly those focused on parenting issues. In fact, on the network graph it is impossible to draw a clear line between the parenting groups and the anti-vaccine community.
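
One simple way to put a number on the contrast between groups that keep to themselves and groups that reach outward is the share of a category's links that lead to a different category. The sketch below is a hypothetical measure on invented data, not the metric the researchers used; the group names, categories, and links are all assumptions.

```python
from collections import Counter

# Hypothetical groups and their categories (invented for illustration).
category_of = {
    "p1": "pro", "p2": "pro", "p3": "pro",
    "a1": "anti", "a2": "anti",
    "m1": "parenting", "m2": "parenting",
}

# Hypothetical links between groups, treated as undirected.
links = [
    ("p1", "p2"), ("p2", "p3"), ("p1", "p3"),    # pro-vaccine groups linking to each other
    ("a1", "a2"),                                # anti-vaccine groups linking to each other
    ("a1", "m1"), ("a2", "m1"), ("a2", "m2"),    # anti-vaccine links to parenting groups
    ("p3", "m2"),                                # a single pro-vaccine link to a parenting group
]

def external_link_share(links, category_of):
    """For each category, return the fraction of its links that leave the category."""
    internal = Counter()
    external = Counter()
    for a, b in links:
        cat_a, cat_b = category_of[a], category_of[b]
        if cat_a == cat_b:
            internal[cat_a] += 1
        else:
            external[cat_a] += 1
            external[cat_b] += 1
    shares = {}
    for cat in set(internal) | set(external):
        shares[cat] = external[cat] / (internal[cat] + external[cat])
    return shares

shares = external_link_share(links, category_of)
```

On this invented data, a quarter of the pro-vaccine links leave the category (0.25), compared with three quarters of the anti-vaccine links (0.75). The figures mean nothing beyond showing the pattern the article describes.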

So, what has changed since the pandemic began? Many anti-vaccine groups have broadened their focus to include misinformation about the pandemic. Given the close connection between the pandemic and vaccines, this comes as no surprise. However, their connections to parenting groups have not changed significantly during the pandemic.

What has changed is that parenting groups have become more closely linked with groups interested in alternative health, such as homeopathy and spiritual healing. These groups were not explicitly anti-vaccine, though they have a tenuous relationship with modern medicine at best. What concerned the researchers was that the alternative medicine groups were closely linked to groups devoted to more traditional conspiracy theories and the misinformation that accompanies them. This includes falsehoods about climate change, the safety of water fluoridation, and the safety of 5G phone service.

Anti-GMO groups, which have ties to alternative health and often think in conspiratorial terms, appear to be a key intermediary in this connection. These links pull parenting communities closer to conspiracy theorists on the network graph. And many of the alternative medicine articles shared with parenting communities ended up with comment threads that turned into discussions of various conspiracies.

The boundaries of moderation

Facebook has made some effort to moderate the disinformation it hosts. This is typically done by flagging a post and attaching a message that says where to find reliable information. The researchers did find such flags on posts from some of the most influential anti-vaccine groups. However, they found that many smaller groups escaped Facebook’s notice, presumably because they were too small for its algorithms to register as a significant threat. Despite their size, these groups often had significant links to other groups with unrelated interests.

Meanwhile, a look at the pages of some of the smaller groups reveals that they have contingency plans in case Facebook gets serious about moderating them. The researchers found instances of groups urging their members to move their discussions to platforms such as Parler, Gab, and Telegram.

In an attempt to make moderation easier, the researchers are building a mathematical model that captures the behavior they observed. They hope it will help identify the groups that pose the greatest risk of spreading misinformation. However, with only a single data set to test it against, its usefulness is unknown.

In any event, the researchers’ findings are concerning. “During the pandemic, traditional parenting circles on Facebook were subjected to a potent two-pronged disinformation technique,” they conclude. One prong was the parenting groups’ existing ties to anti-vaccine groups; as those groups turned their attention to pandemic misinformation, parents were exposed to it as well. The other was the growing interest in alternative medicine, which drew parents into a world rife with conspiracy theories.

Obviously, exposure to the occasional Facebook post isn’t going to change anyone’s mind. Regular exposure to disinformation, on the other hand, can have a cumulative effect, especially when it comes with the sense that your peers are the ones sharing it. And, so far, Facebook’s moderation does not appear capable of interrupting that.